Latency comes up a lot in discussions of stream processing. However, decision makers and IT professionals aren’t always clear on what latency means for the bigger picture.

This is a mistake because latency levels can essentially make or break a data platform once it is launched. Latency is the delay between a piece of data entering the stream and that data reaching its landing point.

Paying attention to latency matters because even a delay of a fraction of a second can have a big impact when large streams of data are involved. You can’t make a good decision based on incomplete data, so latency plays a big role in determining the value of data.

A closer look at stream processing

You must first understand the goal of stream processing before you can understand how it relates to latency. That goal is to make data usable in the real world as it arrives. This essentially means that a platform should be able to digest, display and react to data in real time.

The data that is fed into a platform is used as a catalyst to spark reactions and adjustments. Looking at it this way makes it easy to see why latency is such an important aspect of stream processing.

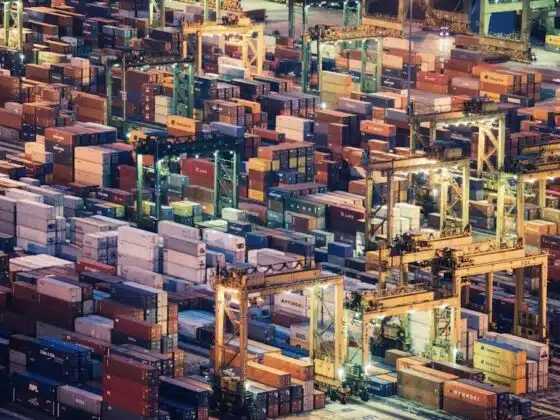

How does this translate to real life in the world around us? Stream processing is the engine behind the beacons that are made for location-based data readings used in security, inventory tracking, customer tracking and in-store marketing.

Looking at latency the right way

The best type of latency is the one that you don’t even notice is there. The goal of customizing a processing platform is to get your latency level as close to zero as possible. The level of latency you can tolerate will depend on the types of applications you’re running.

Many applications require something called sub-second latency. This means that every delay experienced must stay below one second. A latency level this low would be necessary in software and hardware platforms that handle surveillance and monitoring.
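To make the idea of a sub-second requirement concrete, here is a minimal sketch in Python. It assumes each event carries the time it was created, and it treats latency as the gap between that time and the moment the event is processed. The names `Event`, `latency_seconds`, `within_budget` and the one-second `LATENCY_BUDGET_S` are illustrative assumptions, not part of any particular platform.

```python
from dataclasses import dataclass

# Hypothetical budget: "sub-second" means strictly less than one second.
LATENCY_BUDGET_S = 1.0


@dataclass
class Event:
    payload: str
    created_at: float  # seconds on the source's clock when the event was produced


def latency_seconds(event: Event, processed_at: float) -> float:
    """Delay between the event being created and being processed."""
    return processed_at - event.created_at


def within_budget(event: Event, processed_at: float,
                  budget_s: float = LATENCY_BUDGET_S) -> bool:
    """True when the event was handled inside the latency budget."""
    return latency_seconds(event, processed_at) < budget_s


# Usage: an event created at t=10.0s and processed at t=10.25s
reading = Event(payload="door sensor opened", created_at=10.0)
print(latency_seconds(reading, processed_at=10.25))
print(within_budget(reading, processed_at=10.25))
```

In a real deployment the two timestamps usually come from different machines, so clock differences between them would need to be accounted for before trusting the number.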

This makes sense when you consider that watching something unfold in real time isn’t effective unless the related data is displayed as close to real time as possible. It is also important that the platform you use can maintain the same latency level when spikes in data occur.

A platform needs to be able to adjust to fluctuations in data volume without changing latency times and threatening consistency. Another big factor to consider when looking at latency levels is longevity.

It’s important to make sure that latency levels stay consistent for hours, days or weeks at a time while the platform digests large volumes of data without human direction. This aspect of latency is especially important when you consider the increasing role sensors are playing in our lives.
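One way to keep an eye on consistency during spikes and over long runs is to track a high percentile of recent latencies instead of the average, since an average can hide occasional slow events. The sketch below is a rough illustration under stated assumptions: the class name `RollingLatencyMonitor`, the window size, the 99th percentile and the one-second budget are all hypothetical choices, not recommendations from any specific product.

```python
import math
from collections import deque


class RollingLatencyMonitor:
    """Keeps the most recent latency samples and checks a percentile against a budget."""

    def __init__(self, window_size: int = 1000, budget_s: float = 1.0, pct: float = 99.0):
        # deque with maxlen drops the oldest sample automatically,
        # so memory stays bounded however long the stream runs.
        self.samples = deque(maxlen=window_size)
        self.budget_s = budget_s
        self.pct = pct

    def record(self, latency_s: float) -> None:
        self.samples.append(latency_s)

    def percentile(self):
        """Nearest-rank percentile of the current window, or None if it is empty."""
        if not self.samples:
            return None
        ordered = sorted(self.samples)
        rank = max(1, math.ceil(self.pct / 100 * len(ordered)))
        return ordered[rank - 1]

    def healthy(self) -> bool:
        """True while the tracked percentile stays under the budget."""
        value = self.percentile()
        return value is None or value < self.budget_s


# Usage: a small window makes the effect of a spike easy to see.
monitor = RollingLatencyMonitor(window_size=5, budget_s=1.0)
for latency in [0.10, 0.12, 0.11, 0.13]:
    monitor.record(latency)
print(monitor.healthy())   # steady traffic

monitor.record(2.5)        # one slow event during a spike
print(monitor.healthy())
```

Because the window is bounded, a check like this can run unattended for days or weeks, and a single slow event stops affecting the result once it ages out of the window.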

Choosing a processing platform based on latency

What should you keep in mind while making decisions related to latency? The easiest way to sum things up is to say that a system’s latency level is a lot like the energy level of a person running a marathon. You need a stream process that runs consistently and smoothly as it covers mile after mile of data.

A process that constantly gets caught up in snags is like a runner who stops to catch their breath at random intervals, and it will not do you much good. Choosing a processing solution that doesn’t constantly need to stop and catch its breath while reacting to data will ensure that you can depend on the outcomes it produces.